Custom LLM or OpenAI API?

Custom LLM vs OpenAI API: understand the real tradeoffs in cost, latency, data privacy, and capability before you make the call.

Most teams default to the OpenAI API because it is fast to integrate and the results are impressive out of the box. That is often the right call. But there are situations where a custom model or a fine-tuned alternative is worth the extra work.

Here is how to think through it.

The Core Tradeoffs

| Factor | OpenAI API | Custom / Fine-tuned LLM |
|---|---|---|
| Time to first working version | Days | Weeks to months |
| Cost at low volume | Low | High (training + infra) |
| Cost at high volume | Scales linearly with tokens | Largely fixed infra cost, rising in steps as you add capacity |
| Data privacy | Data leaves your servers | Stays on your infra |
| Control over model behaviour | Limited | Full |
| Customisation for domain | Prompt engineering, plus hosted fine-tuning on supported models | Deep, via fine-tuning |
| Latency and availability | Network round trip plus provider load; depends on OpenAI uptime | Controlled by you |
| Best for | Fast prototyping, general tasks | Regulated industries, very specific domains |
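The two cost rows imply a crossover point: API spend grows with every token, while a self-hosted deployment costs roughly the same each month whether it is busy or idle. Below is a rough sketch of that break-even arithmetic. It assumes a single blended per-token price and a flat monthly infrastructure bill. Both figures are hypothetical placeholders, and the fixed side leaves out engineering and training time, which can be substantial.

```python
def monthly_api_cost(tokens_per_month: float, usd_per_million_tokens: float) -> float:
    """API spend grows linearly with token volume."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


def break_even_tokens(fixed_monthly_infra_usd: float, usd_per_million_tokens: float) -> float:
    """Monthly token volume at which API spend equals a flat self-hosting bill."""
    return fixed_monthly_infra_usd / usd_per_million_tokens * 1_000_000


# Hypothetical inputs: replace with your provider's current prices and your own infra quote.
PRICE_PER_MILLION = 5.0  # blended USD per 1M tokens (assumed)
FIXED_INFRA = 4_000.0    # USD per month for GPUs, hosting and ops (assumed)

threshold = break_even_tokens(FIXED_INFRA, PRICE_PER_MILLION)
print(f"Break-even: {threshold:,.0f} tokens/month")

# Compare raw monthly spend at a low and a high volume.
for volume in (50_000_000, 2_000_000_000):
    api = monthly_api_cost(volume, PRICE_PER_MILLION)
    cheaper = "API" if api < FIXED_INFRA else "self-hosted"
    print(f"{volume:>14,} tokens -> API ${api:,.0f} vs infra ${FIXED_INFRA:,.0f} ({cheaper} cheaper)")
```

At these placeholder numbers the crossover sits at 800 million tokens a month. Below that volume the API is cheaper on raw spend.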

Use the OpenAI API when

- You are building a general assistant, content tool, or chatbot where GPT-4 quality is good enough.

- You need to ship fast and validate before investing in custom model work.

- Your volume is low enough that token costs are manageable.

- Your data is not sensitive enough to require on-premises processing.

Consider a custom or fine-tuned model when

- You work in healthcare, law, or finance, and data cannot leave your environment.

- You need a model that behaves consistently in a very narrow domain (specific terminology, formats, edge cases).

- Your production volume is high enough that token costs have become a significant line item.

- You want to reduce latency or remove your dependency on a third-party API's availability and SLA.
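For the narrow-domain case, fine-tuning starts with example conversations that show the exact terminology and output format you expect. OpenAI's fine-tuning endpoint, for instance, accepts a JSONL file where each line holds a `messages` list of system, user, and assistant turns. Many open-source fine-tuning tools accept a similar chat-style layout. The sketch below writes such a file. The invoice-extraction task, the examples, and the file name are all hypothetical.

```python
import json
from pathlib import Path

# Hypothetical narrow task: turn free-text invoice lines into a fixed JSON shape.
SYSTEM_PROMPT = "Extract invoice fields and reply with JSON only."

# Hypothetical examples; a real dataset needs many more, covering your edge cases.
EXAMPLES = [
    (
        "Invoice INV-1042 from Acme Ltd, total GBP 1,250.00.",
        '{"invoice_id": "INV-1042", "vendor": "Acme Ltd", "total": 1250.00, "currency": "GBP"}',
    ),
    (
        "Bill no. 77-B, supplier: Nova Traders, amount due INR 48,000.",
        '{"invoice_id": "77-B", "vendor": "Nova Traders", "total": 48000.00, "currency": "INR"}',
    ),
]


def to_chat_record(system: str, user: str, assistant: str) -> dict:
    """One training example in the chat-style 'messages' layout."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
            {"role": "assistant", "content": assistant},
        ]
    }


def write_jsonl(path: Path, records: list[dict]) -> None:
    """Write one JSON object per line (the JSONL layout)."""
    with path.open("w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


if __name__ == "__main__":
    records = [to_chat_record(SYSTEM_PROMPT, user, answer) for user, answer in EXAMPLES]
    write_jsonl(Path("train.jsonl"), records)  # hypothetical output file name
    print(f"Wrote {len(records)} examples")
```

Keeping the assistant turns in exactly the format you want back is what teaches the model the "specific terminology, formats, edge cases" that prompting alone may not pin down.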

The middle path most teams actually end up on

Most production AI systems are neither a raw OpenAI API call nor a fully custom model built from scratch; they sit somewhere in between. Retrieval-augmented generation (RAG) lets you use a powerful base model while keeping responses grounded in your proprietary data. Fine-tuning lets you adapt an existing model to your domain without training one from scratch. Both approaches are usually faster to ship than a fully custom model, and a fine-tuned smaller model can be cheaper to run than raw GPT-4 at high volume. Before committing to either extreme, the most useful first conversation is usually about where on this spectrum your use case actually sits.
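The core RAG loop has two steps. First, find the passages in your own data that are most relevant to the question. Second, place them in the prompt so the base model answers from them. The minimal sketch below uses bag-of-words cosine similarity as a stand-in for a real embedding model and vector store. The documents are hypothetical, and the final model call is left out.

```python
import math
import re
from collections import Counter

# Hypothetical proprietary snippets; in practice these come from your own documents.
DOCUMENTS = [
    "Refunds are processed within 14 days of receiving the returned item.",
    "Premium support is available Monday to Friday, 9am to 6pm IST.",
    "Enterprise plans include single sign-on and a dedicated account manager.",
]


def vectorise(text: str) -> Counter:
    """Bag-of-words term counts; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = vectorise(query)
    ranked = sorted(docs, key=lambda d: cosine(q, vectorise(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Ground the base model: instruct it to answer only from the retrieved context."""
    sources = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below. If the answer is not there, say so.\n"
        f"Context:\n{sources}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    question = "How long do refunds take?"
    prompt = build_prompt(question, retrieve(question, DOCUMENTS, k=1))
    print(prompt)  # this string would then be sent to the base model of your choice
```

Because the proprietary data travels in the prompt rather than in the model weights, you can update the documents without retraining anything. The tradeoff is that every request carries extra context tokens.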

Questions worth working through with your team before you commit

- What is your projected token volume at six months? At what point does that cost become a real concern?

- Does any of the data flowing through the model contain personal or regulated information?

- How narrow is the task? The narrower it is, the more likely a fine-tuned model is to outperform a general one.

- How much does it matter if the model is unavailable for 30 minutes? If uptime is critical, you may want more control over the stack.

- What does acceptable performance look like? If GPT-4 already hits 90 percent of what you need, the last 10 percent might not be worth the engineering cost.
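Answering "does GPT-4 already hit 90 percent?" requires a number. The simplest way to get one is a small labelled test set that every candidate model runs against. The sketch below scores exact-match accuracy on a hypothetical support-ticket classification task. The test cases, labels, and `fake_model` are illustrative stand-ins for your own data and model client.

```python
from typing import Callable

# Hypothetical labelled test set: inputs paired with the answer you would accept.
TEST_SET = [
    ("Classify: 'Card was charged twice'", "billing"),
    ("Classify: 'App crashes on login'", "technical"),
    ("Classify: 'How do I change my plan?'", "account"),
    ("Classify: 'Refund not received yet'", "billing"),
]


def accuracy(predict: Callable[[str], str], cases: list[tuple[str, str]]) -> float:
    """Share of cases where the normalised model answer matches the expected label exactly."""
    hits = sum(1 for prompt, expected in cases if predict(prompt).strip().lower() == expected)
    return hits / len(cases)


def meets_bar(score: float, target: float = 0.9) -> bool:
    """True if the score reaches the agreed 'good enough' threshold."""
    return score >= target


if __name__ == "__main__":
    # Stand-in for a real model call (API or self-hosted); replace with your own client.
    def fake_model(prompt: str) -> str:
        return "billing" if "charged" in prompt or "Refund" in prompt else "technical"

    score = accuracy(fake_model, TEST_SET)
    print(f"accuracy={score:.0%}, meets 90% bar: {meets_bar(score)}")
```

Running the same harness against the API and against a fine-tuned candidate turns "the last 10 percent" into a measurable gap. Exact-match scoring suits classification; free-form answers need a different scoring rule.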

Not sure which approach fits your project?

Describe what you are building and where you are in the process. We will tell you which direction makes more sense and why.

Have a Project in Mind?

Let's Talk.

Tell us what you are building and we will come back within one business day with a plan, a timeline, and an honest cost estimate.

Ideas, Guides, and

Industry Perspectives

How Much Does AI Development Cost in India? (2026 Guide)

A complete breakdown of AI development costs in India in 2026. Chatbots, ML models, LLM integration, and full AI products.…

Read Article

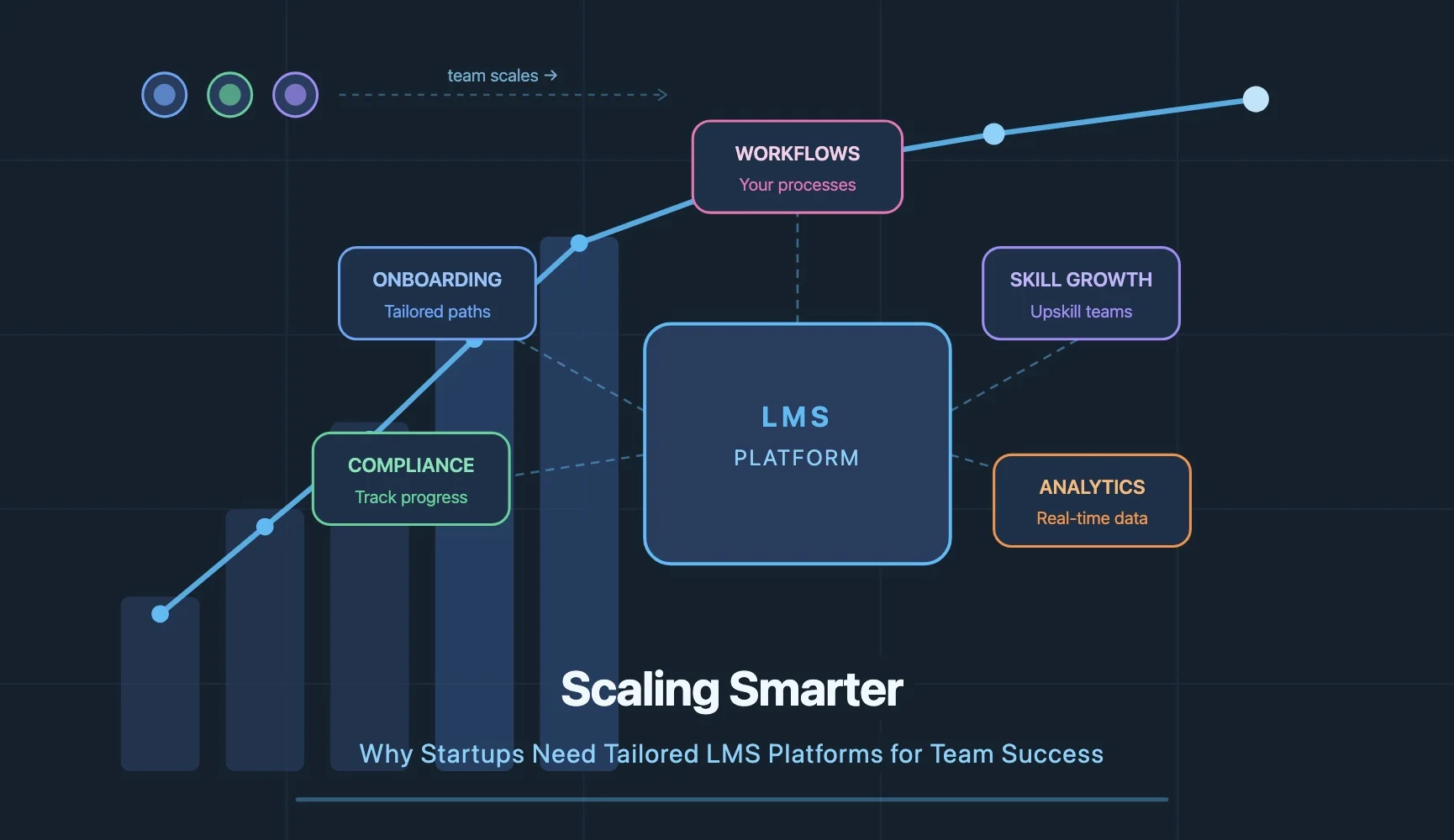

Scaling Smarter: Why Startups Need Tailored LMS Platforms for Team Success

Most startups do not have a training problem. They have a scaling problem. A tailored LMS built around your actual…

Read Article

How AI Development Can Transform Your Business in 2026

AI development is transforming how businesses operate in 2026. Five areas creating real value: process automation, smarter decisions, personalisation at…

Read ArticleLet's Talk About

Your Project

Have a question or ready to start? Drop us a message and we'll get back to you within one business day.

A118, Sector 63

Noida, UP 201301

304 Krishna Classic, A.B Road

Indore, MP 452008